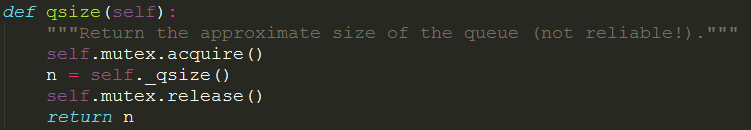

The Python multiprocessing module uses a shared queue data structure to let processes exchange data. After creating a multiprocessing queue, you can use it to pass data between two or more processes. Printing a freshly created queue gives output such as `The multiprocessing Queue is: <multiprocessing.queues.Queue object at 0x7fa48f038070>`. This shows that the queue has been created in memory at the given address.

A pool created with `multiprocessing.Pool(3, worker_main, (the_queue,))` spawns 3 processes (in addition to the parent process). Each child executes the `worker_main` function, which is a simple loop that gets a new `item` from the queue on each iteration. Workers block if nothing is ready to process.

At startup, all 3 processes sleep until the queue is fed with some data. When data is available, one of the waiting workers gets that item and starts to process it. After that, it tries to get another item from the queue, waiting again if nothing is available.

Added some code (submitting `None` to the queue) to shut down the worker processes cleanly, and added code to close and join `the_queue` and `the_pool`. The revised program still begins with `import multiprocessing` and sets `NUM_QUEUE_ITEMS = 20`. That means 40 items are really processed, because each round queues "hello" and "world" separately.
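
A minimal Python 3 sketch of this pattern follows. It is a reconstruction under stated assumptions, not the original program. `NUM_PROCESSES`, `main()`, the `handled` counter, 5 rounds of "hello"/"world" (instead of 20) and a 0.1 s sleep (instead of 1 s) are all illustrative choices. Each worker stops when it reads `None`, so the program queues one `None` per worker before closing and joining the queue and the pool.

```python
import multiprocessing
import os
import time

# Illustrative values (assumptions): 3 workers, 5 rounds of "hello"/"world".
NUM_PROCESSES = 3
NUM_QUEUE_ITEMS = 5  # "hello" and "world" are queued separately, so 10 items in total


def worker_main(queue):
    """Runs in each pool process (as the Pool initializer) until it reads None."""
    handled = 0  # hypothetical counter, added only to make the loop testable
    while True:
        item = queue.get(block=True)  # sleeps until an item is available
        if item is None:  # sentinel: leave the loop so this worker can finish
            break
        print(os.getpid(), "got", item, flush=True)
        time.sleep(0.1)  # simulate a "long" operation
        handled += 1
    return handled  # Pool ignores initializer results


def main():
    the_queue = multiprocessing.Queue()
    # Each of the NUM_PROCESSES children calls worker_main(the_queue) at startup.
    # The trailing comma matters: initargs must be a tuple.
    the_pool = multiprocessing.Pool(NUM_PROCESSES, worker_main, (the_queue,))

    for _ in range(NUM_QUEUE_ITEMS):
        the_queue.put("hello")
        the_queue.put("world")

    # One None per worker, so that every worker_main loop ends.
    for _ in range(NUM_PROCESSES):
        the_queue.put(None)

    # No more puts from this process; wait for the queue's feeder thread to flush.
    the_queue.close()
    the_queue.join_thread()

    # No more work for the pool; wait for all worker processes to exit.
    the_pool.close()
    the_pool.join()


if __name__ == "__main__":
    main()
```

Run it as a script, and each worker prints its process ID next to the items it took. The `if __name__ == "__main__":` guard matters when children are started with the spawn method (the default on Windows and macOS), because each child re-imports the main module. The loop runs inside the Pool initializer, so the pool's regular task API goes unused. Starting three `multiprocessing.Process` objects with `target=worker_main` would be an equivalent alternative.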

So far I managed to achieve this "manually" with a `while 1:` loop. If no process has been launched yet, the loop launches them. If processes are already started, it clears the old processes from the pool and starts new ones. It circles through the processes until it finds a free one, and only then leaves the loop (a commented-out debug line reads `#print str(p.pid) + " job dead, starting new one"`). That, however, leads to tons of problems and errors. I wondered whether I would be better off using a Pool of processes. But my queue is often empty, and then it can be filled with 300 items in a second, so I'm not sure how to handle this.

You could use the blocking capabilities of the queue to spawn multiple processes at startup (using `multiprocessing.Pool`) and let them sleep until some data is available on the queue to process. If you're not familiar with that, you could try to "play" with a simple program that:

- starts with `import multiprocessing`;
- creates the pool with `the_pool = multiprocessing.Pool(3, worker_main, (the_queue,))` (the trailing comma makes `(the_queue,)` a one-element tuple);
- calls `time.sleep(1)` to simulate a "long" operation on each item.

`multiprocessing.Queue` provides a first-in, first-out (FIFO) queue, which means that items are retrieved in the order they were added: the first items added to the queue are the first items retrieved. This is in contrast to other queue types such as last-in, first-out (LIFO) and priority queues. The queue is shared in that each process has access to the same queue structure.

Here's how you can use a multiprocessing queue in Python:

1. Define the worker function that you would like to run in parallel. This function will read from and write to the queue.
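
The sketch below illustrates the worker-function step under some assumptions. The function names `square_worker` and `run`, the squaring workload and the `None` stop signal are hypothetical choices made only for illustration. The worker reads numbers from one `multiprocessing.Queue` and writes results to a second one. There is one producer and one worker, so results come back in the order the inputs were put, which reflects the FIFO behavior of the queue.

```python
import multiprocessing


def square_worker(inbox, outbox):
    """Hypothetical worker for illustration: read numbers, write their squares."""
    # iter(inbox.get, None) keeps calling inbox.get() until it returns None.
    for number in iter(inbox.get, None):
        outbox.put(number * number)


def run(numbers):
    """Hypothetical driver: feed one worker process and collect its results."""
    inbox = multiprocessing.Queue()
    outbox = multiprocessing.Queue()
    worker = multiprocessing.Process(target=square_worker, args=(inbox, outbox))
    worker.start()

    for number in numbers:
        inbox.put(number)  # FIFO: items leave the queue in the order they were put
    inbox.put(None)  # sentinel telling the worker to stop

    # Read exactly one result per input *before* joining the worker.
    results = [outbox.get() for _ in numbers]
    worker.join()
    return results


if __name__ == "__main__":
    print(run([1, 2, 3, 4]))
```

Running the script prints `[1, 4, 9, 16]`. The results are collected before `worker.join()` on purpose. The `multiprocessing` programming guidelines warn that joining a process can deadlock if that process has put items on a queue that have not yet been consumed.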
